As discussed earlier, I want to add parallel compression to MetaIO and NRRD. Since NRRD is bundled with other code and ITK’s copy of it is not up to date, I plan to work on MetaIO first.

I looked at pigz, Mark Adler’s tool for parallel, backwards-compatible zlib compression. However, it has three drawbacks: it is a tool and not a library, it parallelizes only compression (decompression is still single-threaded), and Windows support is still on its TODO list.

In addition to (single-threaded) zlib, which is already used in ITK, I also examined zstd, a more modern compression library, and blosc, a meta-compressor. @neurolabusc suggested using blosc instead of pure zstd, and my simple benchmark shows blosc+zstd to be better than pure zstd for 16-bit short data (which is the most common case).

Explanation of the benchmark

Six different images were used, representative of the types of data we encounter.

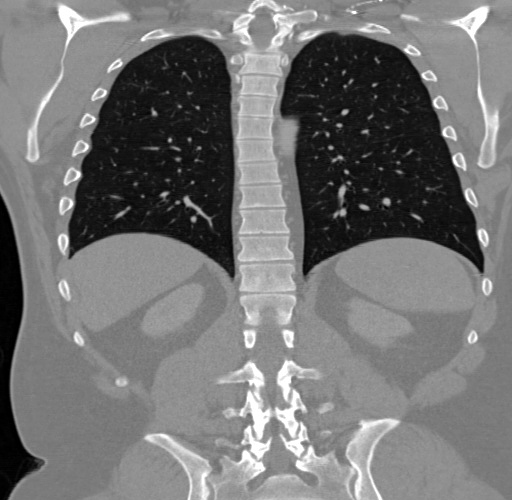

WB - “whole body” CT, once upon a time downloaded from hcuge.ch.

wb-seg - watershed segmentation of WB, created as challenging test data for the RLEImage class. Available here.

MRA - chest MR angiography, downloaded from hcuge.ch.

wbPET - “whole body” PET, from private collection.

CBCT - dental cone beam CT, available here.

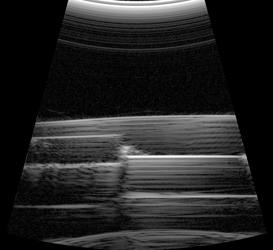

US - a large 2D ultrasound sweep of some phantom, from private collection.

Source code of the benchmark is here:

Tester.zip (1.9 KB)

blosc_compress has a typesize parameter. I kept it mostly at 2, as the majority of the test images are 16-bit shorts. Additionally, I tested with 1 for uchar, and with 1 and 8 for double. The tests were run on an 8-core Ryzen 7 Pro 1700 @ 3.0GHz, using 8 threads. Note: running 16 threads, one per hyper-threaded logical core, does not increase the speed significantly.
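To illustrate why typesize matters: blosc can apply a byte-shuffle filter before handing the data to the actual compressor, and typesize tells it how wide each element is. Below is a minimal C++ sketch of that idea only. `ShuffleBytes` and `UnshuffleBytes` are hypothetical helper names made up for this illustration, not part of the blosc API, and the real filter works on blocks and is SIMD-optimized.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Illustrative byte shuffle (hypothetical helper, NOT the blosc API).
// Input: elements of `typesize` bytes each, stored contiguously.
// Output: byte 0 of every element, then byte 1 of every element, and so on.
std::vector<std::uint8_t> ShuffleBytes(const std::vector<std::uint8_t>& in,
                                       std::size_t typesize)
{
  assert(typesize > 0);
  const std::size_t count = in.size() / typesize; // number of whole elements
  std::vector<std::uint8_t> out(in.size());
  for (std::size_t i = 0; i < count; ++i)
  {
    for (std::size_t b = 0; b < typesize; ++b)
    {
      out[b * count + i] = in[i * typesize + b];
    }
  }
  // Trailing bytes that do not form a whole element are copied unchanged.
  for (std::size_t k = count * typesize; k < in.size(); ++k)
  {
    out[k] = in[k];
  }
  return out;
}

// Inverse of ShuffleBytes: restores the original element-by-element layout.
std::vector<std::uint8_t> UnshuffleBytes(const std::vector<std::uint8_t>& in,
                                         std::size_t typesize)
{
  assert(typesize > 0);
  const std::size_t count = in.size() / typesize;
  std::vector<std::uint8_t> out(in.size());
  for (std::size_t i = 0; i < count; ++i)
  {
    for (std::size_t b = 0; b < typesize; ++b)
    {
      out[i * typesize + b] = in[b * count + i];
    }
  }
  for (std::size_t k = count * typesize; k < in.size(); ++k)
  {
    out[k] = in[k];
  }
  return out;
}
```

With typesize 2, the little-endian 16-bit values 0x0010, 0x0011, 0x0012 go from bytes `10 00 11 00 12 00` to `10 11 12 00 00 00`, which leaves a run of identical high bytes for the compressor to exploit. With typesize 1 the data is left unchanged.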

Results are here:

CompressionBenchmark.xlsx (58.4 KB)

CompressionBenchmark.ods (14.7 KB)

Conclusions

All of the examined alternatives are faster than zlib at low and medium compression levels, even in single-threaded mode. The difference is even more apparent in multi-threaded mode.

I am currently leaning towards using blosc+zstd at compression level 2 as the default. Also, I think we should expose SetCompressionLevel in itk::ImageIOBase; it is currently implemented only by a few IOs (e.g. PNG, MINC). @blowekamp also suggested exposing SetCompressor, which would allow preserving backwards compatibility programmatically if so desired. A configure-time option for backwards-compatible compression is already planned.
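To make the proposal a bit more concrete, here is a rough, self-contained sketch of what such an interface could look like. Everything in it is an assumption for discussion: the class name `ImageIOCompressionSketch`, the string-based compressor name, the getters, the "blosc+zstd" default string, and the 1 to 9 level range are all hypothetical, and none of this is existing itk::ImageIOBase code.

```cpp
#include <algorithm>
#include <cassert>
#include <string>
#include <utility>

// Hypothetical sketch of the proposed interface; NOT actual ITK code.
class ImageIOCompressionSketch
{
public:
  // Select the compressor by name, e.g. "zlib" to keep files readable
  // by older software (the accepted names are an assumption).
  void SetCompressor(std::string name)
  {
    assert(!name.empty());
    m_Compressor = std::move(name);
  }
  const std::string& GetCompressor() const { return m_Compressor; }

  // Clamp the requested level into the range the compressor supports.
  void SetCompressionLevel(int level)
  {
    m_CompressionLevel =
      std::max(1, std::min(level, m_MaximumCompressionLevel));
  }
  int GetCompressionLevel() const { return m_CompressionLevel; }

private:
  std::string m_Compressor = "blosc+zstd"; // default I am leaning towards
  int m_CompressionLevel = 2;              // default I am leaning towards
  int m_MaximumCompressionLevel = 9;       // assumed upper bound
};
```

A caller who needs old readers to open the file would call `SetCompressor("zlib")` before writing; everyone else would get the new default without changing any code.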

Before I start the heavy lifting part of refactoring, are there any suggestions or comments? Everyone is welcome, but these people might have more interest in commenting: @hjmjohnson @glk @brad.king @Stephen_Aylward @fbudin @seanm @jhlegarreta @Niels_Dekker @phcerdan @zivy @bill.lorensen @jcfr @lassoan