Absolutely loving this thread. Thanks for kicking off such a thoughtful discussion.

I very much agree that the human side of our collaboration is paramount, and deserves explicit protection and celebration. Code review and issues are a big part of how we build trust, mentorship, and shared ownership in ITK, and I’d really like to keep those interactions social, relational, and human-to-human, with AI as background tooling rather than a “participant” in the conversation.

I’m also very guilty myself of anthropomorphizing AI agents. It’s so easy to slip into that because the “API” is natural language, but the reviews these systems give are still rule-driven computational models, reflecting patterns from their training data and our existing codebases, not intentional human judgement. We do need to guard against treating them as people; they don’t need us to be “friendly” in the social sense (though there’s no reason to be rude either!), and we shouldn’t let politeness to a tool dilute the clarity of our technical decisions.

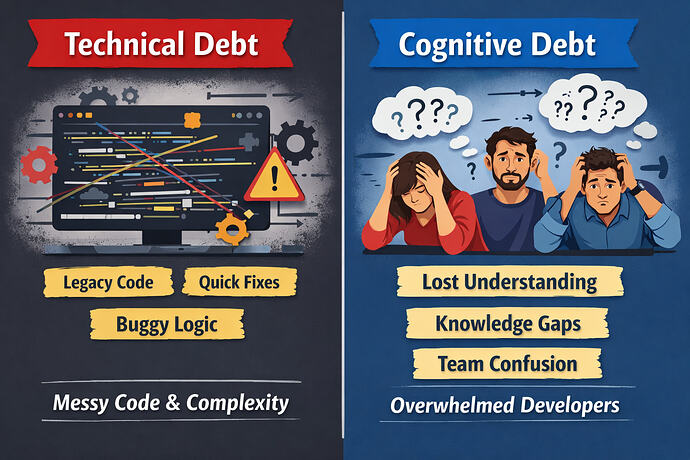

For that reason, I think it’s helpful to mentally file AI review agents alongside linters, compilers, and integration tests. They’re extremely useful, often catch real issues, and can make us more productive and improve code quality. But, just like any other tool, their feedback will never be 100% correct, and occasional false positives or misunderstandings are expected, not a crisis. We should treat AI comments as strong hints or hypotheses to evaluate, not as authoritative verdicts.

We should also stay mindful of the energy cost of all this. Running heavy models on every commit has a real environmental and financial footprint, so designing our workflows to get high value from AI per unit of compute (e.g., scoping when and how we trigger reviews) feels important.
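To make "scoping" a bit more concrete, here is a rough Python sketch of the kind of gate I have in mind. Everything in it is hypothetical and only for illustration: the `PullRequest` fields, the `should_run_ai_review` name, the skipped path prefixes, and the size threshold are placeholders I made up, not existing ITK CI settings or any vendor's API.

```python
from dataclasses import dataclass
from typing import Sequence

# Hypothetical policy values; placeholders for illustration, not ITK settings.
SKIP_PREFIXES = ("docs/", "test-data/")
MAX_CHANGED_LINES = 2000


@dataclass(frozen=True)
class PullRequest:
    """Minimal, made-up description of a PR for the purpose of this sketch."""
    is_draft: bool
    changed_files: Sequence[str]
    changed_lines: int


def should_run_ai_review(pr: PullRequest) -> bool:
    """Return True only when an automated AI review seems worth the compute."""
    if pr.is_draft:
        return False  # wait until the author marks the PR as ready
    if pr.changed_lines > MAX_CHANGED_LINES:
        return False  # very large diffs: leave it to a manual trigger
    # Run only if at least one changed file is outside the skipped paths.
    return any(not path.startswith(SKIP_PREFIXES) for path in pr.changed_files)
```

A gate like this would sit in front of whatever actually triggers the review, so drafts, docs-only changes, and huge diffs don't spend compute by default. A human can still request a review explicitly in those cases.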

On the positive side, the quality and efficiency of AI code review is moving quickly. Claude Code just today launched a dedicated multi‑agent Code Review system that automatically reviews each PR and leaves inline comments where it finds likely issues, modeled on Anthropic’s internal workflows for nearly every PR. GitHub Copilot’s code review features are also maturing, with Copilot acting as a reviewer that can leave comments and even help implement changes via follow‑up actions. And there’s a broader ecosystem that’s been evolving for years.

That diversity of input is valuable, including among AI tools themselves. I can imagine a future where, for well‑understood parts of the ITK codebase and workflows that the team is comfortable with, it might be reasonable to auto‑enable AI reviews by default because we trust both the tools and our patterns for interpreting them. But even if/when we get there, I’d still want human reviewers at the center. AI provides instrumentation and safety rails. Humans do the actual collaboration and make the final call.